How Does Google NanoBanana Work? (In Simple English)

Quick Answer

Google NanoBanana (officially Gemini 2.5 Flash Image) generates images by predicting pixels sequentially: it starts from one pixel and predicts each next one based on all previous pixels, the same way ChatGPT predicts the next word in a sentence. This autoregressive approach produces more accurate, consistent results than older diffusion models, which work by gradually removing noise from static. The trade-off is speed: pixel-by-pixel generation is slower, but anatomical errors like extra fingers, common in diffusion models, are dramatically reduced.

AI image generators like Google NanoBanana have come a long way, from multi-fingered monstrosities to replacing Photoshop. The progress has been quick and shows no signs of stopping. It is a very powerful technology. However, to use these tools effectively, you need to know how they work.

In this post, I will explain in simple terms how these technologies work.

How it used to be

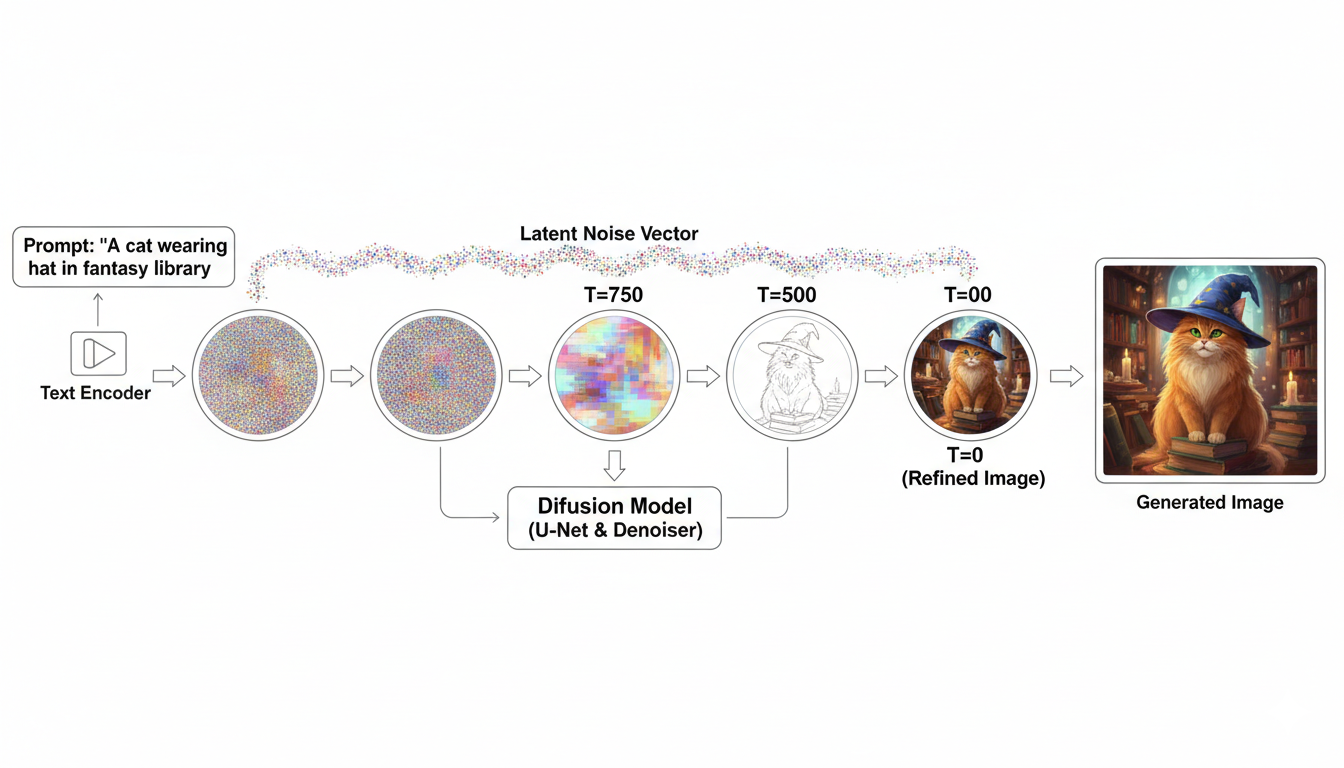

Before learning how it works now, we must first learn what came before. Image generation exploded around 2022, with models like OpenAI’s DALL-E 2 and Stability AI’s Stable Diffusion. These were “diffusion models”. They generate images the way a painter builds up a painting.

If you have seen famed American TV personality Bob Ross paint a landscape, you will know that he painted in layers that seemed like simple brushstrokes until it all came together into a complete image.

Stable Diffusion works in a similar way. When prompted with text, it first generates “noise”, like static on an old TV (kids, ask your parents). It then removes a little of that noise at a time, with each step guided by the prompt toward the image you described, like Bob Ross painting on new layers. It keeps “denoising” until the desired image shows up.
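To make the noise-removal loop concrete, here is a minimal toy sketch in Python. It is not how Stable Diffusion is implemented: the function name `toy_denoise`, the four-value “image”, and the step size are all made up for illustration. Most importantly, a real model predicts the noise with a neural network guided by your prompt, while this toy cheats by already knowing the target image.

```python
import random

def toy_denoise(target, steps=50, seed=0):
    """Start from pure static and remove a little noise at every step."""
    rng = random.Random(seed)
    # Step 0: an "image" made of pure static (random brightness values from 0 to 1).
    image = [rng.random() for _ in target]
    for _ in range(steps):
        # A real diffusion model PREDICTS this noise with a neural network.
        # ASSUMPTION for the toy: we compute it from a target we already know.
        predicted_noise = [pixel - goal for pixel, goal in zip(image, target)]
        # Remove only a fraction of the predicted noise, so the image
        # sharpens gradually instead of appearing in one jump.
        image = [pixel - 0.2 * noise for pixel, noise in zip(image, predicted_noise)]
    return image

target = [0.0, 0.5, 1.0, 0.5]   # the picture we want to end up with
result = toy_denoise(target)
print([round(value, 3) for value in result])
```

Each pass through the loop leaves the image a little less like static and a little more like the target, which is the “painting in layers” idea in code form.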

Phantom Hands

The problem with diffusion models is that they tend to be inconsistent. They lack “awareness” of their subject. The result is images of people with 8-finger hands and other uncanny features.

The inconsistencies also mean that they are not very good at editing photos or iterating on their own generated images. This is a fundamental limitation of the technology. We needed a different approach. And it came from an unexpected place: text generation.

How ChatGPT Works

Please, please, don’t go! I promise this is important.

ChatGPT and other Large Language Models (LLMs) are “word-predicting machines”. They take the prompt you give them and calculate the most likely next word, given everything they learned from their training data. It is much like the autocomplete on your phone. But unlike autocomplete, an LLM calculates the next word based on all the other words that came before. I wrote a more detailed (but still simply worded) write-up on my personal blog.

This idea is the secret sauce behind how these new image models work.
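Here is a tiny Python sketch of next-word prediction, just to show why the earlier words matter. It is an illustration only: real LLMs use neural networks trained on enormous amounts of text, not a lookup table, and the names `train` and `predict_next` and the twelve-word “training data” are invented for this example.

```python
from collections import Counter, defaultdict

def train(text, max_context=3):
    """Count which word follows each run of up to max_context words."""
    words = text.split()
    table = defaultdict(Counter)
    for i in range(1, len(words)):
        for n in range(1, max_context + 1):
            if i - n < 0:
                break
            context = tuple(words[i - n:i])
            table[context][words[i]] += 1
    return table

def predict_next(table, prompt, max_context=3):
    """Return the most likely next word, preferring the longest known context."""
    words = prompt.split()
    for n in range(min(max_context, len(words)), 0, -1):
        context = tuple(words[-n:])
        if context in table:
            return table[context].most_common(1)[0][0]
    return None  # the toy has never seen anything like this prompt

table = train("the cat sat on the mat the dog slept on the rug")

# Both prompts end in "on the", yet the earlier words change the prediction.
print(predict_next(table, "the cat sat on the"))    # mat
print(predict_next(table, "the dog slept on the"))  # rug
```

A phone keyboard that only looked at the last word would have to give the same suggestion for both prompts. Looking further back is what lets the prediction fit the sentence.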

How NanoBanana works

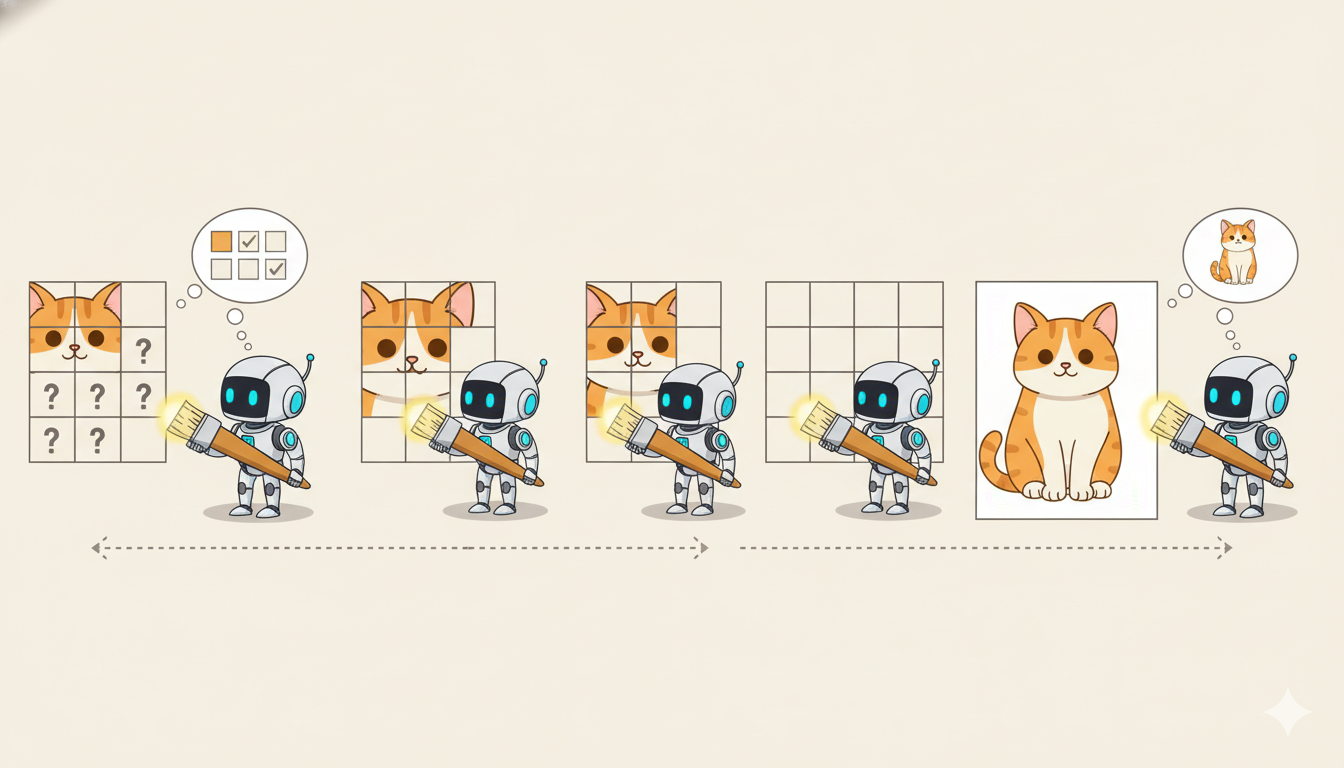

NanoBanana and ChatGPT’s image generation do to pixels what ChatGPT does to words. The model starts with one pixel and predicts the most likely color for the next pixel. Then it predicts the one after that based on the previous two. And so on.

This is slower than diffusion, but the results are so much better.
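The same idea can be sketched for pixels. The toy below is not Google's code: `predict_next_pixel` is a hypothetical hand-written rule standing in for the neural network, and the “image” only has black (0) and white (1) pixels. What it does show accurately is the shape of the process: pixels are produced one at a time, in reading order, and every new pixel is chosen by looking at the pixels already generated.

```python
def predict_next_pixel(previous, width):
    """Hypothetical stand-in for the model: choose the next pixel from all earlier ones."""
    if not previous:
        return 0  # very first pixel: nothing to look at yet
    position = len(previous)
    if position % width == 0:
        # Start of a new row: use the opposite of the pixel directly above.
        return 1 - previous[position - width]
    # Otherwise: use the opposite of the pixel to the left.
    return 1 - previous[-1]

def generate(width, height):
    """Build the image one pixel at a time, left to right, top to bottom."""
    pixels = []
    for _ in range(width * height):
        pixels.append(predict_next_pixel(pixels, width))
    # Cut the flat list of pixels into rows so it looks like an image.
    return [pixels[row * width:(row + 1) * width] for row in range(height)]

for row in generate(4, 3):
    print(row)
# [0, 1, 0, 1]
# [1, 0, 1, 0]
# [0, 1, 0, 1]
```

Because each pixel depends on what has already been drawn, the pattern stays consistent across the whole image. It is also why this approach is slow: pixel number 100 cannot be produced until the first 99 exist.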

That’s it?

Pretty much. There are a lot of complicated nuances once you get into the mathematics and science. However, in the abstract, this is how image generation works. That includes our own at Brandize. We use this tech to handle our users’ graphic design needs. All the logo generation, revisions, and vectorizations are done using these advanced models.

We use our own secret sauce of embeddings and prompt construction. You can create a logo for free, with just a click of a button. Get 20% off your first order.

Ready to create your logo?

Generate a professional SVG + PNG logo in under 30 seconds.